    {
        extern char _end;

        uint64_t kern_pages = total_pages - user_pages;

        enum { KERN_START, KERN, USER_START, USER } state = KERN_START;
        uint64_t region_start = 0, end = 0, start, size, size_in_pg;

                if (state == KERN_START) {
                    region_start = start;
                    state = KERN;
                }

                switch (state) {
                    case KERN:
                        if (rem > size_in_pg) {
                            rem -= size_in_pg;
                            break;
                        }

                                &free_start, region_start, start + rem * PGSIZE);

                        if (rem == size_in_pg) {
                            rem = user_pages;
                            state = USER_START;
                        } else {
                            region_start = start + rem * PGSIZE;
                            rem = user_pages - size_in_pg + rem;
                            state = USER;
                        }
                        break;
                    case USER_START:
                        region_start = start;
                        state = USER;
                        break;
                    case USER:
                        if (rem > size_in_pg) {
                            rem -= size_in_pg;
                            break;
                        }

                        break;
                    default:

                }
            }
        }

        void *pool_end;
        size_t page_idx, page_cnt;

                if (end < usable_bound)
                    continue;

                    pg_round_up (start >= usable_bound ? start : usable_bound);
    split:
                else

                    goto split;
                } else {

                }
            }
        }
    }
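
The kernel/user split in the listing is easier to follow in isolation. The following is a minimal, self-contained sketch of the same state transitions, not the palloc.c code itself: `struct region`, `struct span`, `split_regions`, and the 4 KiB `PGSIZE` are hypothetical names and values introduced for illustration, and the final assignment of the user range assumes that the user pool runs to the end of the last region visited.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PGSIZE 4096ULL   /* Assumed page size for this sketch. */

/* Hypothetical types; not the structures used in palloc.c. */
struct region { uint64_t start, size; };   /* One usable memory region. */
struct span   { uint64_t start, end; };    /* Address range given to a pool. */

enum state { KERN_START, KERN, USER_START, USER };

/* Gives the first kern_pages pages to *kern and the rest to *user,
   following the state transitions shown in the listing. */
static void
split_regions (const struct region *regs, size_t n, uint64_t kern_pages,
               uint64_t user_pages, struct span *kern, struct span *user) {
	enum state state = KERN_START;
	uint64_t rem = kern_pages, region_start = 0, end = 0;

	assert (n > 0);
	for (size_t i = 0; i < n; i++) {
		uint64_t start = regs[i].start;
		uint64_t size_in_pg = regs[i].size / PGSIZE;
		end = start + regs[i].size;

		if (state == KERN_START) {
			region_start = start;
			state = KERN;
		}

		switch (state) {
		case KERN:
			if (rem > size_in_pg) {      /* Region lies wholly in the kernel range. */
				rem -= size_in_pg;
				break;
			}
			kern->start = region_start;  /* Kernel range ends inside this region. */
			kern->end = start + rem * PGSIZE;
			if (rem == size_in_pg) {     /* Exact fit: user range starts at the next region. */
				rem = user_pages;
				state = USER_START;
			} else {                     /* Split: user range starts mid-region. */
				region_start = start + rem * PGSIZE;
				rem = user_pages - size_in_pg + rem;
				state = USER;
			}
			break;
		case USER_START:
			region_start = start;
			state = USER;
			break;
		case USER:
			if (rem > size_in_pg)
				rem -= size_in_pg;
			break;
		default:
			break;
		}
	}
	/* Assumption: the user range runs to the end of the last region. */
	user->start = region_start;
	user->end = end;
}
```

For example, with two 8-page regions at 0x100000 and 0x200000, `kern_pages = 10` and `user_pages = 6`, the sketch yields a kernel range of 0x100000 to 0x202000 and a user range of 0x202000 to 0x208000.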

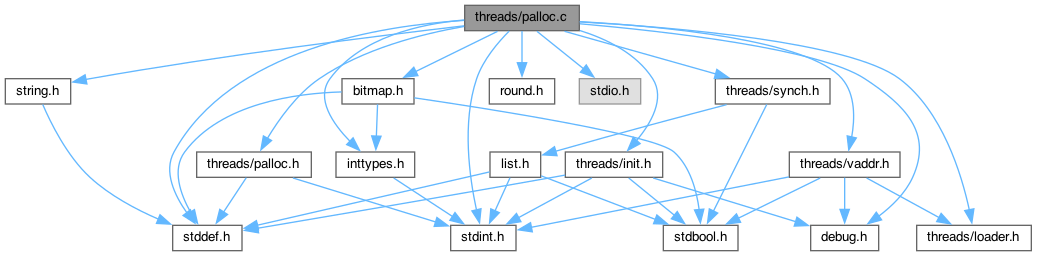

#define MULTIBOOT_INFO

Definition: loader.h:15

uint16_t size

Definition: mmu.h:0

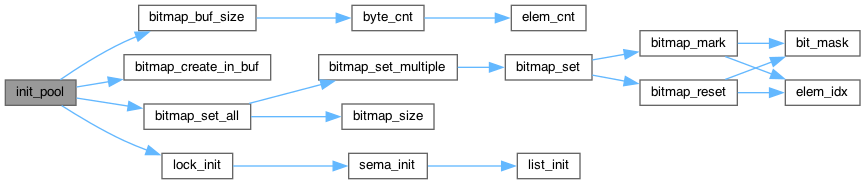

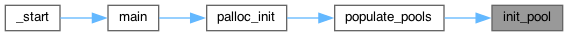

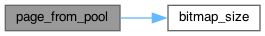

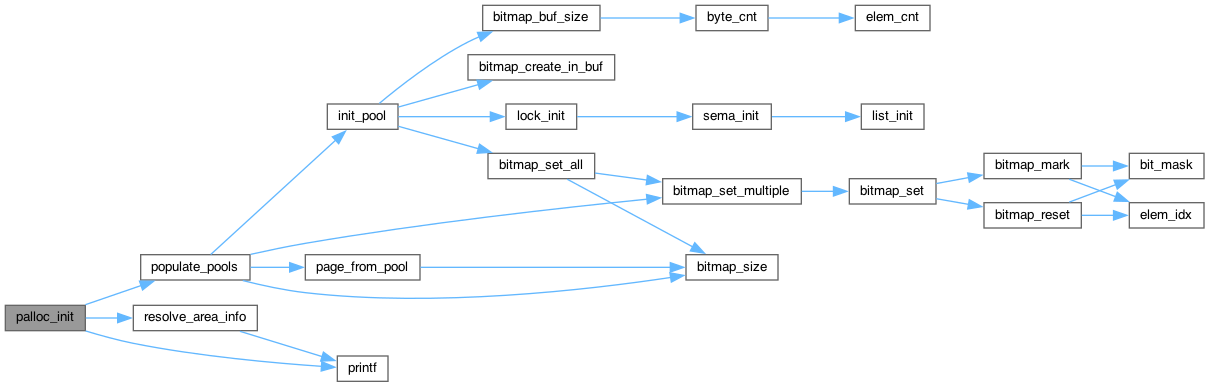

static void init_pool(struct pool *p, void **bm_base, uint64_t start, uint64_t end)

Definition: palloc.c:339

#define APPEND_HILO(hi, lo)

Definition: palloc.c:76

#define ACPI_RECLAIMABLE

Definition: palloc.c:75

#define USABLE

Definition: palloc.c:74

size_t user_page_limit

Definition: palloc.c:40

unsigned int uint32_t

Definition: stdint.h:26

uint32_t len_hi

Definition: palloc.c:62

uint32_t mem_lo

Definition: palloc.c:59

uint32_t len_lo

Definition: palloc.c:61

uint32_t type

Definition: palloc.c:63

uint32_t mem_hi

Definition: palloc.c:60

uint32_t mmap_len

Definition: palloc.c:52

uint32_t mmap_base

Definition: palloc.c:53

#define pg_round_up(va)

Definition: vaddr.h:29

#define ptov(paddr)

Definition: vaddr.h:49
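
The cross-reference list names `APPEND_HILO(hi, lo)` and `pg_round_up(va)` without showing their bodies. Judging only from the names and from the 32-bit `mem_hi`/`mem_lo` and `len_hi`/`len_lo` fields, a plausible reading is that the first joins two 32-bit halves into one 64-bit value and the second rounds an address up to the next page boundary. The sketch below illustrates that reading; `append_hilo`, `round_up_to_page`, and the 4 KiB `PGSIZE` are hypothetical stand-ins, not the definitions in palloc.c or vaddr.h.

```c
#include <assert.h>
#include <stdint.h>

#define PGSIZE 4096ULL   /* Assumed page size for this sketch. */

/* Hypothetical helper: joins a high and a low 32-bit half into 64 bits. */
static uint64_t
append_hilo (uint32_t hi, uint32_t lo) {
	return ((uint64_t) hi << 32) | lo;
}

/* Hypothetical helper: rounds addr up to the nearest page boundary.
   Addresses already on a boundary are returned unchanged. */
static uint64_t
round_up_to_page (uint64_t addr) {
	assert ((PGSIZE & (PGSIZE - 1)) == 0);   /* The mask trick needs a power of two. */
	return (addr + PGSIZE - 1) & ~(PGSIZE - 1);
}
```

Under these assumptions, `append_hilo (0x1, 0x2)` gives 0x100000002, and `round_up_to_page (0x1001)` gives 0x2000.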